This blog post focusses on test automation and is one piece of a larger puzzle involving the craft that is release management.

“Real artists ship.” – Steve Jobs

The products discussed in this blog post are written in Java and JavaScript. They are shipped as binaries and installed on premise via repositories. However, before these packages are built and potentially released, the test automation executed by the continuous integration (CI) server, in this case Jenkins, must pass. These tests include unit tests, integration tests, configuration tests, performance tests and acceptance tests.

Some of the tests must be executed on complex setups and, at times, repeated across permutations of varying configurations. Enter Vagrant. Vagrant is used heavily for creating virtualised environments that are cheap and easily reproduced. In addition, Vagrant enables the setup of complex network topologies that help facilitate specific test scenarios. The result is that tests are executed on a freshly prepared environment, and results are as reproducible on Jenkins as they are on any developer’s machine.

This journey begins, like all other software journeys, on the drawing board. The problem was that, despite having hundreds and thousands of regression tests, executing them independently upon new commits had proven tricky, let alone repeating the same test suite over different configurations. In addition, static code analysis was only performed on developers’ personal setups.

A meeting was swiftly called and plans for test automation were concocted.

where all ideas grow

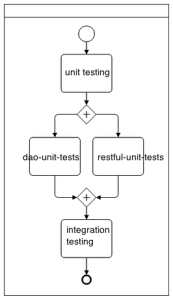

The major flow of the test automation and build pipeline can be broken down into the following stages.

- src compile

- unit tests

- integration tests

- acceptance tests

- configuration tests

- performance tests

- preview

- demo

- release (manual step)

Note that each of the build steps above is a “join” job, capable of triggering multiple downstream jobs. These downstream jobs can run in parallel, or lock resources on a single Jenkins node if needed. The join job can proceed to the next join if and only if all triggered jobs have run to completion successfully. For example, the unit tests job will trigger multiple independent jobs for testing data access objects, RESTful APIs, the frontend and various other components within the system. Jenkins will only move on to integration tests if all unit tests pass.
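The join behaviour described above can be sketched in a few lines of Ruby. This is only a model of the idea, not how the join trigger plugin is implemented. The stage names follow the list above, while the job names, the lambdas and the `run_stage` and `run_pipeline` helpers are hypothetical stand-ins for real Jenkins jobs.

```ruby
# Illustrative model of "join" jobs: each stage fans out to downstream jobs
# that run in parallel, and the pipeline advances only if every one succeeds.
# Job names and lambdas are hypothetical stand-ins for real Jenkins jobs.

# Runs every job of a stage in its own thread; true only if all jobs pass.
def run_stage(jobs)
  threads = jobs.map do |_name, job|
    Thread.new do
      begin
        job.call ? true : false
      rescue StandardError
        false # a job that raises counts as a failure
      end
    end
  end
  threads.map(&:value).all?
end

# Walks the stages in order and stops at the first stage with a failed job.
# Returns the names of the stages that completed successfully.
def run_pipeline(stages)
  completed = []
  stages.each do |stage_name, jobs|
    break unless run_stage(jobs)
    completed << stage_name
  end
  completed
end

stages = {
  "unit tests" => {
    "data access objects" => -> { true },
    "RESTful APIs"        => -> { true },
    "frontend"            => -> { true }
  },
  "integration tests" => {
    "fresh VM install" => -> { false } # simulated failure
  },
  "acceptance tests" => {
    "browser suite" => -> { true }
  }
}

puts run_pipeline(stages).inspect
```

In this made-up run the integration stage fails, so only the unit tests stage is reported as completed and the acceptance tests are never started.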

Installing and configuring Jenkins

This is a stock standard Jenkins setup, with the master compiling and unit testing the source. See the Vagrantfile below for the full setup.

Required Jenkins Plugins

- git plugin

- build pipeline plugin

- workspace cleanup plugin

- envinject plugin

- join trigger plugin

- throttle concurrent builds plugin

- copyartifact plugin

- instant messaging plugin

- irc plugin

- build-timeout plugin

Note that at the time of writing, the build pipeline plugin had two bugs that made the rendered pipeline view unintelligible. A fix was available here: https://code.google.com/r/jessica-join-support-build-pipeline-plugin/

For the throttle concurrent builds plugin, only the global Multi-Project Throttle Categories are configured. The plugin is used as a locking mechanism for shared resources across Jenkins; see https://wiki.jenkins-ci.org/display/JENKINS/Throttle+Concurrent+Builds+Plugin

Automated unit tests

Since the Jenkins master compiles the source code, it was decided that it would also run the unit tests. All test output is in JUnit format, so Jenkins can identify failed tests and report them appropriately.
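To give a feel for what identifying failed tests in JUnit output involves, here is a minimal Ruby sketch that reads a JUnit-style XML report and lists the failing test cases. It is not what Jenkins does internally. The `summarise_junit` helper and the sample report are made up for illustration, and the sketch assumes the common layout in which a failing `testcase` element contains a `failure` or `error` child.

```ruby
require "rexml/document"

# Summarise a JUnit-style XML report: count the test cases and collect the
# names of those with a <failure> or <error> child. The sample is made up.
def summarise_junit(xml)
  doc = REXML::Document.new(xml)
  total = 0
  failed = []
  doc.elements.each("//testcase") do |testcase|
    total += 1
    if testcase.elements["failure"] || testcase.elements["error"]
      failed << "#{testcase.attributes['classname']}.#{testcase.attributes['name']}"
    end
  end
  { total: total, failed: failed }
end

sample = <<~XML
  <testsuite name="dao" tests="3" failures="1" errors="0">
    <testcase classname="UserDaoTest" name="findsById"/>
    <testcase classname="UserDaoTest" name="savesUser">
      <failure message="expected 1 but was 0"/>
    </testcase>
    <testcase classname="UserDaoTest" name="deletesUser"/>
  </testsuite>
XML

summary = summarise_junit(sample)
puts "#{summary[:total]} tests, #{summary[:failed].size} failed"
summary[:failed].each { |name| puts "FAILED: #{name}" }
```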

Recommendation: Ensure that all tests can be executed with a single command, that they do not depend on execution order, and that setup is idempotent. Docker can be considered at this point to improve test repeatability and scalability, and to avoid the old “works on Jenkins slaves/masters, but we have no idea what has been installed on it” excuse.

Automated integration tests

Similar to running unit tests, but on a setup closer to the supported production environment. Vagrant is used extensively to set up and tear down fresh VMs for running these tests.

Recommendation: Making these tests run via a single command is highly recommended. This makes setup with Jenkins easy and makes reproducing a failed test result on a developer’s setup more reliable. Note that these tests can take a while to set up since they involve installing third-party packages with apt-get or yum through Vagrant. At the time of writing, performing vagrant up in parallel has proven to be buggy, so the throttle plugin is utilised to lock Vagrant as a single Jenkins resource. Note that, depending on the test, it may be possible to use Docker instead of Vagrant. In addition, the AWS EC2 Vagrant plugin may provide access to additional resources to conduct these tests where needed.
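The effect of locking Vagrant as a single resource can be illustrated with a mutex. The sketch below is an in-process analogy only: in the real pipeline the throttle plugin does the locking across Jenkins jobs. `VAGRANT_LOCK`, `with_vagrant` and the job names are hypothetical, and the `sleep` stands in for a real `vagrant up` followed by a test run.

```ruby
# Serialise access to a shared resource, in the spirit of a throttle category
# that allows one concurrent build. VAGRANT_LOCK and with_vagrant are
# hypothetical names; the block stands in for a real `vagrant up` / test run.
VAGRANT_LOCK = Mutex.new

def with_vagrant(job_name, log)
  VAGRANT_LOCK.synchronize do
    log << "#{job_name}: start"
    yield
    log << "#{job_name}: finish"
  end
end

log = []
jobs = %w[integration-a integration-b integration-c]

threads = jobs.map do |job|
  Thread.new do
    with_vagrant(job, log) { sleep 0.01 } # placeholder for the real work
  end
end
threads.each(&:join)

# Because only one job holds the lock at a time, every "start" entry is
# immediately followed by the matching "finish" entry.
puts log
```

The order in which the three jobs acquire the lock is not guaranteed, but their log entries never interleave, which is the property that matters when parallel vagrant up is unreliable.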

Automated acceptance tests

At the time of writing, the acceptance test framework is being developed with Selenium and PhantomJS. A mechanism to record user clicks and interactions, then replay them to verify the expected result, is critical for this job.

Recommendation: Install packaged binaries on a VM from the intended repository where possible. This way the full installation process is tested (essentially testing the install guide). Furthermore, my view is that HAR output fits RESTful API testing. Selenium should not be ignored as it tests cross-browser support and provides a simple mechanism for recording user interactions.
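One possible reading of the HAR suggestion is to record the HTTP traffic of a test run as a HAR file and then assert on the recorded responses. The Ruby sketch below takes that approach under stated assumptions: the `failed_requests` helper, the sample URLs and the rule that anything outside the 2xx range counts as a failure are all made up for illustration. Only the `log`, `entries`, `request` and `response` fields come from the HAR format itself.

```ruby
require "json"

# Returns a "METHOD URL -> STATUS" line for every HAR entry whose response
# status is outside the 2xx range. The 2xx rule is an assumption.
def failed_requests(har_json)
  entries = JSON.parse(har_json).fetch("log").fetch("entries")
  failures = entries.reject do |entry|
    (200..299).cover?(entry["response"]["status"])
  end
  failures.map do |entry|
    request = entry["request"]
    "#{request['method']} #{request['url']} -> #{entry['response']['status']}"
  end
end

# A tiny hand-written HAR document; the URLs are hypothetical.
sample_har = JSON.generate({
  "log" => {
    "version" => "1.2",
    "entries" => [
      { "request"  => { "method" => "GET", "url" => "http://localhost:8080/api/users" },
        "response" => { "status" => 200 } },
      { "request"  => { "method" => "POST", "url" => "http://localhost:8080/api/users" },
        "response" => { "status" => 500 } }
    ]
  }
})

puts failed_requests(sample_har)
```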

Automated performance tests

At the time of writing, automated performance tests are not in place yet. However, my personal recommendation is to incorporate YSlow and PhantomJS into the build pipeline. See http://yslow.org/phantomjs/

Automated configuration tests

At the time of writing, different dependencies and configurations are being verified manually and are not yet automated. Automating this is highly recommended. A provisioning tool such as Puppet, Chef, or Ansible that is capable of generating a Vagrantfile based on the supported configurations will help provision the environments for these tests. The same acceptance test suite should be repeated on each supported configuration to verify conformity.
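As a rough illustration of generating a Vagrantfile from a list of supported configurations, the sketch below uses plain Ruby string building in place of a provisioning tool. The `CONFIGURATIONS` table and the `vagrantfile_for` helper are hypothetical, and the machine and box names are placeholders rather than a real support matrix.

```ruby
# Hypothetical matrix of supported configurations: machine name => base box.
# The names are placeholders, not the project's real support matrix.
CONFIGURATIONS = {
  "ubuntu-openjdk7" => "precise64",
  "centos-openjdk7" => "centos64"
}

# Builds the text of a multi-machine Vagrantfile with one machine definition
# per configuration, so the same test suite can be run against each of them.
def vagrantfile_for(configurations)
  machines = configurations.map do |name, box|
    <<~MACHINE
      config.vm.define "#{name}" do |machine|
        machine.vm.box = "#{box}"
      end
    MACHINE
  end
  # Indent each machine definition by two spaces inside the configure block.
  body = machines.join.lines.map { |line| "  " + line }.join
  "Vagrant.configure(\"2\") do |config|\n#{body}end\n"
end

puts vagrantfile_for(CONFIGURATIONS)
```

Each generated machine can then be brought up by name, and the acceptance suite repeated against it.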

Preview & Demo

Make available a freshly built Vagrant box with all dependencies and binaries installed. This is highly recommended if you have vendors or consultants, or just wish to pass on a demo box to prospects. It is also useful for guerrilla user testing.

Release

Release is a manual step to avoid any mishaps. If this were a hosted service, this step should be automated and deployment should occur as new binaries pass through this gauntlet of tests.

Putting it all together

The only thing missing is automated resilience testing and testing for single points of failure. But for now, behold, a sea of green.

sea of green

Vagrantfile to reproduce the Jenkins master

    # -*- mode: ruby -*-
    # vi: set ft=ruby :

    VAGRANTFILE_API_VERSION = "2"

    # Shell script that provisions the Jenkins master. The heredoc body and
    # its SCRIPT terminator must start at the left margin of the Vagrantfile.
    $script_jenkins = <<SCRIPT
    echo ===== Installing tools, git, devscripts, debhelper, checkinstall, curl, rst2pdf, and unzip =====
    sudo apt-get update
    sudo apt-get install git -y
    sudo apt-get install unzip -y
    sudo apt-get install devscripts -y
    sudo apt-get install debhelper -y
    sudo apt-get install checkinstall -y
    sudo apt-get install curl -y
    sudo apt-get install rst2pdf -y
    echo ===== done ====

    echo ===== installing ruby1.8 and gems =====
    sudo apt-get install ruby1.8 -y
    wget http://production.cf.rubygems.org/rubygems/rubygems-2.0.7.tgz
    tar xvf rubygems-2.0.7.tgz
    cd rubygems-2.0.7/
    sudo checkinstall -y ruby setup.rb
    echo ===== done ====

    echo ===== Installing compass and zurb-foundation =====
    sudo gem1.8 install compass
    sudo gem1.8 install zurb-foundation
    echo ===== done ====

    echo ===== Installing openjdk-7-jdk and ant =====
    sudo apt-get install openjdk-7-jdk -y
    sudo apt-get install ant -y
    echo ===== done ====

    echo ===== installing jenkins =====
    cd ~
    wget -q -O - http://pkg.jenkins-ci.org/debian/jenkins-ci.org.key | sudo apt-key add -
    echo "deb http://pkg.jenkins-ci.org/debian binary/" | sudo tee -a /etc/apt/sources.list
    sudo apt-get update
    sudo apt-get install jenkins -y
    echo ===== done ====

    echo ===== installing mongodb =====
    sudo apt-get install mongodb -y
    echo ===== done =====

    echo ===== install nodejs and npm via nvm very hackish unless we fix it =====
    curl https://raw.githubusercontent.com/creationix/nvm/master/install.sh | sh
    source /root/.profile
    nvm install v0.10.18
    nvm use v0.10.18
    npm -v
    node -v
    # Copy the nvm-managed node into /usr/local so that every user can run it
    n=$(which node);n=${n%/bin/node}; chmod -R 755 $n/bin/*; sudo cp -r $n/{bin,lib,share} /usr/local
    sudo -s which node
    echo ===== done =====

    echo ===== Installing grunt-cli =====
    sudo npm install -g grunt-cli
    echo ===== done ====

    echo ===== virtualbox and vagrant =====
    cd ~
    # -y added so the install does not wait for confirmation during provisioning
    sudo apt-get install virtualbox -y
    wget http://files.vagrantup.com/packages/db8e7a9c79b23264da129f55cf8569167fc22415/vagrant_1.3.3_x86_64.deb
    sudo dpkg -i vagrant_1.3.3_x86_64.deb
    echo ===== done =====

    echo ===== install jenkins-jobs ===
    cd ~
    git clone https://github.com/openstack-infra/jenkins-job-builder
    cd jenkins-job-builder/
    sudo python setup.py install
    echo ===== done =====
    SCRIPT

    Vagrant.configure(VAGRANTFILE_API_VERSION) do |config|
      config.vm.define :jenkins do |jenkins|
        jenkins.vm.box = "jenkins"
        jenkins.vm.box_url = "http://files.vagrantup.com/precise64.box"

        # Run the provisioning script defined above
        jenkins.vm.provision :shell, :inline => $script_jenkins

        # Jenkins listens on 8080 in the guest; reach it on 38080 from the host
        jenkins.vm.network "forwarded_port", guest: 8080, host: 38080
      end
    end